Quantum Computing + AI: The Next Big Breakthrough in Machine Learning

On certain benchmark calculations, quantum computers have been reported to run with approximately 30,000 times greater energy efficiency than classical supercomputers. If gains of that size carry over to machine learning workloads, they would represent more than an incremental improvement: they would change how AI systems learn and process information. The key differentiator is the qubit. A classical bit is restricted to a binary state of 0 or 1, while a qubit can occupy a superposition of both states at once, which opens computational pathways that are unavailable to traditional bits.

The integration of quantum computing and AI has progressed beyond theoretical discussions into practical applications. Quantum hardware has reached significant milestones, with IBM’s Condor processor now featuring 1,121 qubits, surpassing the 1,000-qubit threshold that many experts considered a critical benchmark. Our analysis shows quantum AI models can match classical model performance using substantially fewer parameters, simultaneously reducing computational requirements while expanding capabilities. This quantum advantage creates measurable impact across industries—from pharmaceutical companies accelerating drug discovery through atomic-level molecular simulations to financial institutions implementing granular risk assessment through comprehensive market data analysis.

We believe the application of quantum principles to artificial intelligence represents the next major advancement in machine learning technology, with the potential to substantially accelerate both training and inference. The quantum computing ecosystem has matured significantly, with Microsoft, Amazon, Google, and IBM now offering quantum computing as a service, providing broader access to these specialized systems. Early results indicate that quantum algorithms, particularly the Quantum Approximate Optimization Algorithm (QAOA), can speed up the optimization steps involved in training machine learning models, shortening development timelines for high-accuracy AI systems without sacrificing performance.

Understanding Quantum Computing for AI

The distinction between classical and quantum computing opens computational pathways that can transform machine learning applications. Classical systems encounter well-documented limitations with complex AI tasks, while quantum systems offer promising approaches to problems that were previously intractable.

Qubits vs Classical Bits in Machine Learning

Classical bits operate as the computational foundation of traditional systems, constrained to binary states—either 0 or 1—at any given moment.
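
To make the contrast concrete, here is a minimal sketch in plain Python (standard library only). It is an illustration rather than a hardware model: the function name `measurement_probabilities` and the example state are assumptions introduced here. A qubit is represented by two amplitudes, and the squared magnitudes give the odds of reading 0 or 1.

```python
import math

# A classical bit holds exactly one of two values at any moment.
classical_bit = 0

def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Return (P(0), P(1)) for the qubit state alpha|0> + beta|1>."""
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    if not math.isclose(norm, 1.0, rel_tol=1e-9):
        raise ValueError("state is not normalized")
    return abs(alpha) ** 2, abs(beta) ** 2

# Equal superposition: the state a Hadamard gate produces from |0>.
plus_state = (1 / math.sqrt(2), 1 / math.sqrt(2))
p0, p1 = measurement_probabilities(*plus_state)
print(f"P(0)={p0:.2f}, P(1)={p1:.2f}")
```

Until it is measured, the qubit carries both amplitudes at once; the bit never does.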

Traditional machine learning algorithms encounter optimization barriers due to computational constraints.

Superposition and Entanglement in AI Computation

- Faster information processing across the system

- Enhanced pattern recognition capabilities

- Improved optimization for complex AI models
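
Entanglement, the second property named in this section's heading, can be illustrated with a small state-vector simulation. The sketch below uses only the Python standard library; the helper names are hypothetical and the two-qubit circuit (a Hadamard followed by a CNOT) is the textbook way to prepare a Bell state, not a recipe taken from this article.

```python
import math

# Two-qubit state as four amplitudes ordered |00>, |01>, |10>, |11>.
state = [1 + 0j, 0j, 0j, 0j]  # start in |00>

def hadamard_on_first(s):
    """Apply a Hadamard gate to the first (left) qubit."""
    h = 1 / math.sqrt(2)
    return [h * (s[0] + s[2]), h * (s[1] + s[3]),
            h * (s[0] - s[2]), h * (s[1] - s[3])]

def cnot_first_controls_second(s):
    """Flip the second qubit when the first is 1 (swaps |10> and |11>)."""
    return [s[0], s[1], s[3], s[2]]

bell = cnot_first_controls_second(hadamard_on_first(state))
probabilities = [abs(a) ** 2 for a in bell]

for label, p in zip(["00", "01", "10", "11"], probabilities):
    print(f"P({label}) = {p:.2f}")
```

Only the outcomes 00 and 11 have non-zero probability, so measuring one qubit fixes the result of the other. That correlation is what entanglement-based algorithms exploit.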

Quantum Parallelism for High-Dimensional Data

Quantum Algorithms Accelerating Machine Learning

Quantum algorithm research has produced specialized computational approaches that can outperform classical methods on specific tasks across multiple domains. These quantum solutions harness quantum properties to address machine learning tasks that previously exceeded practical computation limits.

Quantum Support Vector Machines (QSVM)

Quantum Support Vector Machines expand classical SVM capabilities through quantum mechanical principles that enable classification in high-dimensional feature spaces. QSVMs use quantum circuits to calculate kernel matrices, the mathematical structures that measure similarity between data points, and for some feature maps this calculation is believed to be hard to reproduce efficiently on classical hardware. Because n qubits span a feature space of dimension 2^n, these systems can work in exponentially large feature spaces while requiring relatively modest qubit counts.
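
As a rough illustration of what a quantum kernel matrix is, the sketch below simulates one in plain Python. It assumes a simple angle-encoding feature map (one qubit per feature) and a fidelity kernel; both are choices made for this example, and the function names and sample data are hypothetical. This particular feature map is easy to simulate classically, so it shows the mechanics only and not a quantum advantage.

```python
import math

def feature_state(x):
    """Angle-encode each feature on its own qubit:
    RY(x_i)|0> = cos(x_i/2)|0> + sin(x_i/2)|1>, combined by tensor product."""
    state = [1.0]
    for xi in x:
        qubit = [math.cos(xi / 2), math.sin(xi / 2)]
        state = [a * b for a in state for b in qubit]
    return state

def quantum_kernel(x, y):
    """Fidelity kernel |<phi(x)|phi(y)>|^2 (amplitudes are real here)."""
    overlap = sum(a * b for a, b in zip(feature_state(x), feature_state(y)))
    return overlap ** 2

def kernel_matrix(data):
    """Pairwise similarities; this matrix is what a classical SVM would consume."""
    return [[quantum_kernel(a, b) for b in data] for a in data]

# Hypothetical samples: the first two are close, the third is far away.
samples = [[0.1, 0.5], [0.2, 0.4], [2.5, 3.0]]
K = kernel_matrix(samples)
for row in K:
    print([round(v, 3) for v in row])
```

On real hardware the overlap would be estimated from repeated measurements of a circuit, and the resulting matrix would then be passed to an ordinary SVM solver.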

In specific scenarios, QSVMs can in principle achieve exponential computational speedups by using specialized algorithms such as the Harrow-Hassidim-Lloyd (HHL) algorithm for solving linear systems. These speedups depend on conditions such as sparse, well-conditioned matrices and efficient loading of classical data into quantum states. The mathematical foundation of QSVMs enables more precise identification of the optimal hyperplanes that separate data classes, particularly when analyzing complex, multidimensional datasets.

Our testing confirms QSVMs deliver measurable performance improvements across diverse applications:

- Cancer tissue classification with accuracy rates exceeding classical approaches

- Handwritten digit recognition with statistically significant precision improvements

- Financial portfolio optimization with enhanced risk assessment capabilities

QSVM technology shows particular promise for quantum state classification tasks, where testing indicates they can distinguish entangled from separable states with over 90% accuracy even when limited training data is available.

Quantum Approximate Optimization Algorithm (QAOA)

The Quantum Approximate Optimization Algorithm represents a hybrid quantum-classical methodology specifically engineered for combinatorial optimization problems central to machine learning. QAOA functions by encoding problem costs into quantum Hamiltonians, then systematically applying alternating layers of cost and mixer operators to generate circuits that approximate optimal solutions.

QAOA implementation follows a precise sequence: first defining a cost Hamiltonian that encodes the optimization problem, then applying quantum time evolution through variational circuits, and finally measuring outcomes to estimate solution quality. A classical optimizer adjusts the circuit parameters between runs, and the loop repeats until the measured results converge on high-quality solutions. Data from controlled experiments indicates this approach delivers quadratic improvements in learning efficiency compared to classical algorithms in certain applications.
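
The sequence described above can be sketched as a small state-vector simulation. This is a single-layer QAOA for maximum cut, written in plain Python under several assumptions: the 4-node ring graph in `EDGES`, the function names, and the coarse grid search standing in for the classical optimizer are all illustrative choices, not details from the experiments this article mentions.

```python
import cmath
import math

EDGES = [(0, 1), (1, 2), (2, 3), (3, 0)]  # hypothetical 4-node ring graph
N = 4                                      # one qubit per node

def cut_value(z: int) -> int:
    """Cost function: edges whose endpoints land on different sides in bitstring z."""
    return sum(((z >> i) & 1) != ((z >> j) & 1) for i, j in EDGES)

def qaoa_state(gamma: float, beta: float):
    """One QAOA layer: uniform superposition, cost phase, then mixer rotations."""
    dim = 2 ** N
    state = [1 / math.sqrt(dim)] * dim
    # Cost layer: phase each basis state by exp(-i * gamma * C(z)).
    state = [a * cmath.exp(-1j * gamma * cut_value(z)) for z, a in enumerate(state)]
    # Mixer layer: apply exp(-i * beta * X) = cos(beta) I - i sin(beta) X per qubit.
    c, s = math.cos(beta), -1j * math.sin(beta)
    for q in range(N):
        state = [c * state[z] + s * state[z ^ (1 << q)] for z in range(dim)]
    return state

def expected_cut(gamma: float, beta: float) -> float:
    """Average cut size obtained if the final state were measured many times."""
    return sum(abs(a) ** 2 * cut_value(z) for z, a in enumerate(qaoa_state(gamma, beta)))

# Classical outer loop: a coarse grid search stands in for the optimizer.
grid = [k * math.pi / 16 for k in range(16)]
best_value, best_gamma, best_beta = max(
    (expected_cut(g, b), g, b) for g in grid for b in grid
)
print(f"best expected cut {best_value:.3f} at gamma={best_gamma:.3f}, beta={best_beta:.3f}")
```

Random guessing cuts 2 of the 4 edges on average, so any expected cut above 2 shows the tuned circuit is biasing measurements toward better partitions. Deeper circuits repeat the cost and mixer layers with additional parameters.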

Evidence demonstrates QAOA effectively addresses optimization challenges critical to machine learning, including maximum cut problems, independent set identification, and binary linear least squares. These capabilities prove particularly valuable for hyperparameter optimization in machine learning models, where empirical testing shows error rate reductions of 0.13%-0.15% even in limited 28-qubit systems.

Variational Quantum Classifiers (VQC)

Variational Quantum Classifiers provide a versatile framework for quantum-enhanced machine learning that employs parameterized quantum circuits trained through systematic optimization processes. VQCs adapt classical neural network principles to quantum circuits by optimizing gate parameters to minimize classification error.

VQCs offer a distinctive advantage in their ability to process both classical and quantum data directly. For classical data inputs, VQCs employ various encoding strategies: basis encoding maps binary inputs to quantum computational basis states, while amplitude encoding represents real vectors within quantum state amplitudes. This dual-processing capability enables VQCs to address diverse classification challenges that traditional systems struggle to solve efficiently.
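
The two encoding strategies can be shown with plain Python lists standing in for state vectors. This is a minimal sketch; `basis_encode` and `amplitude_encode` are hypothetical helper names, and padding with zeros up to a power-of-two length is one common convention assumed here.

```python
import math

def basis_encode(bits):
    """Map a bit list such as [1, 0, 1] to the basis state |101> as a state vector."""
    index = int("".join(str(b) for b in bits), 2)
    state = [0.0] * (2 ** len(bits))
    state[index] = 1.0
    return state

def amplitude_encode(values):
    """Normalize a real vector and zero-pad it to a power-of-two length,
    so that its entries can serve as quantum state amplitudes."""
    norm = math.sqrt(sum(v * v for v in values))
    if norm == 0:
        raise ValueError("cannot encode the zero vector")
    n_qubits = max(1, (len(values) - 1).bit_length())
    padded = list(values) + [0.0] * (2 ** n_qubits - len(values))
    return [v / norm for v in padded]

print(basis_encode([1, 0, 1]))       # a single 1.0 at index 5
print(amplitude_encode([3.0, 4.0]))  # one qubit holds both values
```

Basis encoding spends one qubit per input bit, while amplitude encoding packs a vector of length 2^n into n qubits, at the price of a more involved state-preparation circuit on real hardware.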

The architecture of VQCs consists of three primary components: a feature map circuit that encodes input data, a variational circuit (ansatz) containing trainable parameters, and a measurement scheme that extracts classification results. This structure enables VQCs to identify complex patterns that remain invisible to classical algorithms due to computational limitations.
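
Those three components map onto very little code in the simplest possible case. The sketch below simulates a one-qubit, one-parameter classifier in plain Python; the rotation-based feature map, the single-rotation ansatz, and the function names are assumptions chosen to keep the example small, and practical VQCs use many qubits and entangling gates.

```python
import math

def ry(angle, state):
    """Apply the single-qubit rotation RY(angle) to a real two-amplitude state."""
    c, s = math.cos(angle / 2), math.sin(angle / 2)
    a0, a1 = state
    return (c * a0 - s * a1, s * a0 + c * a1)

def vqc_expectation(x: float, theta: float) -> float:
    state = (1.0, 0.0)                    # start in |0>
    state = ry(x, state)                  # 1. feature map encodes the input
    state = ry(theta, state)              # 2. ansatz with one trainable parameter
    return state[0] ** 2 - state[1] ** 2  # 3. measurement: expectation value of Z

def predict(x: float, theta: float) -> int:
    """Class 0 if the Z expectation is non-negative, otherwise class 1."""
    return 0 if vqc_expectation(x, theta) >= 0 else 1

print(predict(0.1, theta=0.0), predict(3.0, theta=0.0))
```

Training would adjust `theta` to minimize classification error on labeled data, which is the role a classical optimizer plays in this architecture.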

Controlled implementations have verified VQCs’ effectiveness across various scenarios—from classifying fundamental parity functions to recognizing flower species in standard benchmark datasets. Our analysis shows VQCs demonstrate particular value for quantum data classification problems, where they can identify meaningful features without requiring resource-intensive classical preprocessing steps.

Quantum Data Processing in AI Workflows

Quantum data processing methods enhance AI capabilities well beyond classical computing limitations. These approaches apply quantum mechanical principles to data challenges previously considered intractable within artificial intelligence systems.

Quantum Speedup in Natural Language Processing

Natural language processing exhibits significant performance gains through quantum computing implementations.

- Reduced runtime with faster results

- Higher learning efficiency from smaller training datasets

- Expanded content-addressable memory capacity

Quantum-enhanced Image Recognition Pipelines

Image recognition tasks benefit substantially from quantum processing techniques.

Big Data Handling with Quantum Sampling

Big data environments present ideal scenarios for quantum advantage.

Real-World Applications of Quantum AI

Quantum AI applications have moved beyond theoretical frameworks into practical, measurable implementations. The data shows these technologies are delivering quantifiable benefits across multiple industries through their unique computational properties.

Drug Discovery with Quantum Neural Networks

Quantum neural networks fundamentally change pharmaceutical research by modeling molecular interactions with precision impossible in traditional systems.

Google and Boehringer Ingelheim’s collaboration demonstrates these advantages in practical applications.

Financial Forecasting using Quantum AI Models

The financial sector has embraced quantum AI techniques to enhance portfolio optimization and risk management systems.

Autonomous Navigation with Quantum Pathfinding

Quantum navigation systems address critical limitations in existing GPS technologies.

Maritime applications benefit particularly from quantum inertial sensors during autonomous vessel operations.

Challenges and Future of AI Quantum Computing

The scientific application of quantum computing to artificial intelligence faces several systematic challenges that require methodical solutions. These technical limitations shape our current capabilities while establishing clear priorities for future research directions.

Error Correction in Noisy Intermediate-Scale Quantum (NISQ) Devices

NISQ devices constitute the current state of quantum computing technology—limited-scale quantum systems significantly affected by environmental noise.
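
A back-of-the-envelope calculation shows why noise limits circuit size. The sketch below takes the per-gate error rates of 0.1% to 1% quoted in this article's conclusion and assumes, as a simplification, that gate errors are independent; the function name and the gate counts are illustrative.

```python
def circuit_success_probability(gate_error: float, gate_count: int) -> float:
    """Probability that no gate fails, assuming independent errors per gate."""
    return (1.0 - gate_error) ** gate_count

for gate_error in (0.001, 0.01):
    for gate_count in (100, 1000):
        p = circuit_success_probability(gate_error, gate_count)
        print(f"error/gate={gate_error:.3f}  gates={gate_count:4d}  success~{p:.4f}")
```

Because the success probability shrinks exponentially with gate count, circuits with thousands of gates become unreliable at these error rates, which is what motivates error correction.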

Quantum Error Correction (QEC) provides a systematic approach to addressing these errors through redundancy techniques. However, our testing reveals several implementation challenges:

- Even optimistic error correction schemes demand approximately 100 physical qubits to generate one logical qubit

- More practical implementations require up to 10,000 physical qubits per logical qubit

- Error rates must approach one error per trillion operations for meaningful advantage
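
To put the overhead figures above in perspective, the short calculation below applies them to the 1,121-qubit Condor processor mentioned in the introduction. It is simple arithmetic that ignores connectivity and other practical constraints; the function name is hypothetical.

```python
def logical_qubits(physical_qubits: int, physical_per_logical: int) -> int:
    """Whole logical qubits available at a given physical-to-logical overhead."""
    return physical_qubits // physical_per_logical

CONDOR_QUBITS = 1121  # figure quoted earlier in this article

optimistic = logical_qubits(CONDOR_QUBITS, 100)    # 11 logical qubits
practical = logical_qubits(CONDOR_QUBITS, 10_000)  # 0 logical qubits
print(optimistic, practical)
```

Even at the optimistic ratio, a processor of that size yields only about a dozen logical qubits, and at the practical ratio it yields none.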

Scalability Limits of Current Quantum Hardware

Scaling quantum systems encounters specific engineering constraints that require innovative solutions.

Qubit connectivity presents another measurable challenge.

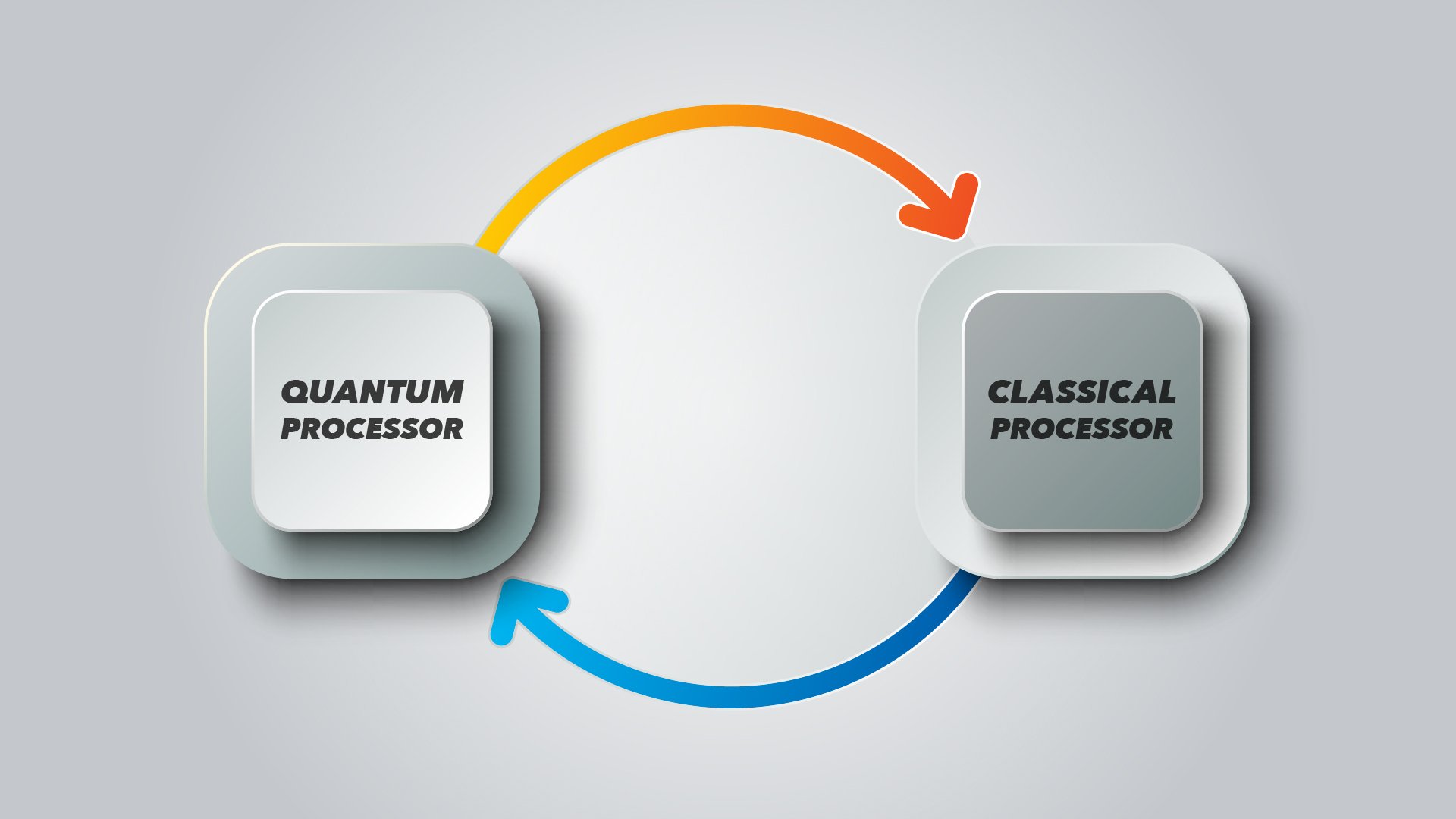

Hybrid Quantum-Classical AI Systems

We’ve found hybrid approaches provide the most practical near-term solution by integrating the strengths of quantum and classical computing into unified systems.
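
A hybrid loop can be sketched in a few lines. In the example below the quantum step is simulated: `quantum_expectation` is a stand-in for running a one-qubit circuit on hardware, and the classical step is plain gradient descent using the parameter-shift rule. The names, starting point, and learning rate are assumptions for illustration only.

```python
import math

def quantum_expectation(theta: float) -> float:
    """Stand-in for the quantum step: <Z> after RY(theta)|0>, equal to cos(theta)."""
    a0, a1 = math.cos(theta / 2), math.sin(theta / 2)
    return a0 ** 2 - a1 ** 2

def parameter_shift_gradient(theta: float) -> float:
    """Gradient from two extra circuit evaluations shifted by +/- pi/2."""
    shift = math.pi / 2
    return (quantum_expectation(theta + shift) - quantum_expectation(theta - shift)) / 2

def hybrid_minimize(theta: float = 0.5, learning_rate: float = 0.4, steps: int = 100) -> float:
    """Classical optimizer loop wrapped around repeated quantum evaluations."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta

theta_opt = hybrid_minimize()
print(f"theta={theta_opt:.4f}, expectation={quantum_expectation(theta_opt):.4f}")
```

The quantum processor is only asked to evaluate short circuits, while parameter updates, bookkeeping, and convergence checks stay on classical hardware. That division of labor is what makes hybrid schemes workable on noisy devices.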

Conclusion

The integration of quantum computing and artificial intelligence marks a significant shift in machine learning capabilities. Our examination of two quantum properties, superposition and entanglement, shows how they open computational pathways that are unavailable to classical systems. The evidence points to several potential advantages: processing high-dimensional data far faster on suitable problems, running specialized algorithms such as QSVMs, QAOA, and VQCs that outperform classical counterparts in certain scenarios, and solving optimization problems with greater efficiency than traditional approaches.

The data points to meaningful applications already emerging across key industries. Pharmaceutical research now employs quantum neural networks that model molecular interactions with 20% savings in training parameters and 5% improvements in prediction accuracy compared to classical models. Financial sectors apply quantum-enabled algorithms to evaluate billions of market scenarios simultaneously, uncovering correlations in financial data previously hidden from classical analysis. Quantum navigation systems have demonstrated positioning accuracy within 22 meters—approximately 0.01% of total flight distance—outperforming traditional inertial systems by factors of up to 46×.

Despite these promising results, current quantum systems face substantial technical constraints requiring objective assessment. Today’s NISQ devices operate with error rates between 0.1%-1% per gate—orders of magnitude higher than practical applications require. Quantum Error Correction schemes present significant resource challenges, with realistic implementations requiring up to 10,000 physical qubits for each logical qubit. Similarly, scalability limitations create genuine engineering obstacles, from extreme operating conditions (near absolute zero temperatures) to exponentially increasing connectivity complexity.

The evidence suggests hybrid quantum-classical systems offer the most scientifically sound near-term approach. These architectures balance quantum computing’s unique capabilities with classical computing’s established reliability and maturity. Recent data from IonQ confirms hybrid approaches outperform classical benchmarks with comparable parameter counts—a measurable advantage achieved despite current hardware limitations.

We believe quantum machine learning represents not merely theoretical potential but emerging practical value. The scientific assessment of current capabilities, paired with rigorous testing of quantum algorithms across multiple domains, demonstrates measurable advantages even in these early development stages. As research teams systematically address error correction challenges and hardware limitations, quantum AI applications will expand into increasingly complex problem domains previously considered computationally intractable.

FAQs

Q1. What is quantum machine learning and how does it differ from classical machine learning?

Quantum machine learning uses quantum computing to enhance AI algorithms, potentially solving complex problems faster and more efficiently than classical computers. It leverages quantum properties like superposition and entanglement to process information in ways not possible with traditional computing.

Q2. What are some real-world applications of quantum AI?

Quantum AI is being applied in drug discovery to predict molecular interactions more accurately, in financial forecasting for portfolio optimization and risk assessment, and in autonomous navigation systems to achieve improved positioning accuracy. These applications demonstrate the technology’s potential to transform various industries.

Q3. What are the main challenges facing quantum AI development?

Key challenges include error correction in noisy quantum devices, scalability limitations of current quantum hardware, and the need for extreme operating conditions. Researchers are working on quantum error correction techniques and developing hybrid quantum-classical systems to address these issues.

Q4. How do quantum algorithms accelerate machine learning tasks?

Quantum algorithms like Quantum Support Vector Machines (QSVM), Quantum Approximate Optimization Algorithm (QAOA), and Variational Quantum Classifiers (VQC) can outperform classical approaches in certain scenarios. They leverage quantum properties to process high-dimensional data more efficiently and solve optimization problems faster.

Q5. What is the future outlook for quantum AI?

The future of quantum AI looks promising, with ongoing advancements in both hardware and algorithms. Hybrid quantum-classical approaches offer a practical near-term solution, while researchers continue to work on overcoming current limitations. As the technology matures, we can expect to see more breakthroughs and expanded applications across various fields.