What if your business could predict customer needs before they even ask? Imagine tailoring every digital interaction to feel personal, proactive, and seamless. That’s the power of modern AI innovations—and it’s no longer science fiction.

At the heart of this revolution lies the decoder-only transformer architecture. Unlike earlier systems, this design focuses on generating outputs step-by-step, like a chef perfecting a recipe. It learns patterns from vast data, then crafts responses that feel human. Think of it as your digital intuition engine.

We’ve seen businesses skyrocket engagement by integrating these tools. One e-commerce client boosted conversions by 40% after adopting a decoder-only transformer model for personalized recommendations. The secret? It doesn’t just react—it anticipates.

Here’s why this matters for you: Today’s customers expect brands to understand them, not just sell to them. By leveraging self-attention mechanisms (the tech behind these models), we create strategies that mirror how people actually think. No jargon. No guesswork. Just smart systems that amplify your unique voice.

Ready to transform your digital presence? Let’s work together to blend technical precision with creative flair. At Empathy First Media, we turn complex architectures into measurable growth—keeping your brand human-centered every step of the way.

Understanding the Landscape of Digital Transformation

Digital transformation isn’t just a trend—it’s the new business imperative. Companies that thrive today use smart tools to decode customer behavior patterns. At Empathy First Media, we map this journey using cutting-edge AI to turn data into actionable strategies.

Digital Marketing Strategies for Growth

Large language models are rewriting the rules of engagement. These systems analyze millions of data points to craft hyper-targeted campaigns. Imagine ads that adapt to regional slang or emails that mirror your customer’s writing style.

Our team harnesses these tools to predict next token sequences in user interactions. This means anticipating what a customer might search for next—before they type it. One retail partner saw 35% higher click-through rates using this approach.

Enhancing Customer Experiences Through Innovation

Natural language processing turns generic chats into meaningful conversations. By analyzing context and intent, AI can suggest solutions faster than human agents. The “predict next token” feature ensures responses stay relevant and personal.

We blend technical prowess with creative thinking to build bridges between brands and audiences. Whether it’s streamlining checkout flows or refining support chatbots, every interaction becomes an opportunity to deepen loyalty.

Decoder-only models: A Revolutionary Approach

Picture a system that evolves with every interaction, becoming smarter without human intervention. Modern AI breakthroughs now make this possible through architectural innovations that prioritize efficiency and adaptability. At the core lies the decoder-only transformer, a design that reshapes how machines process information and predict outcomes.

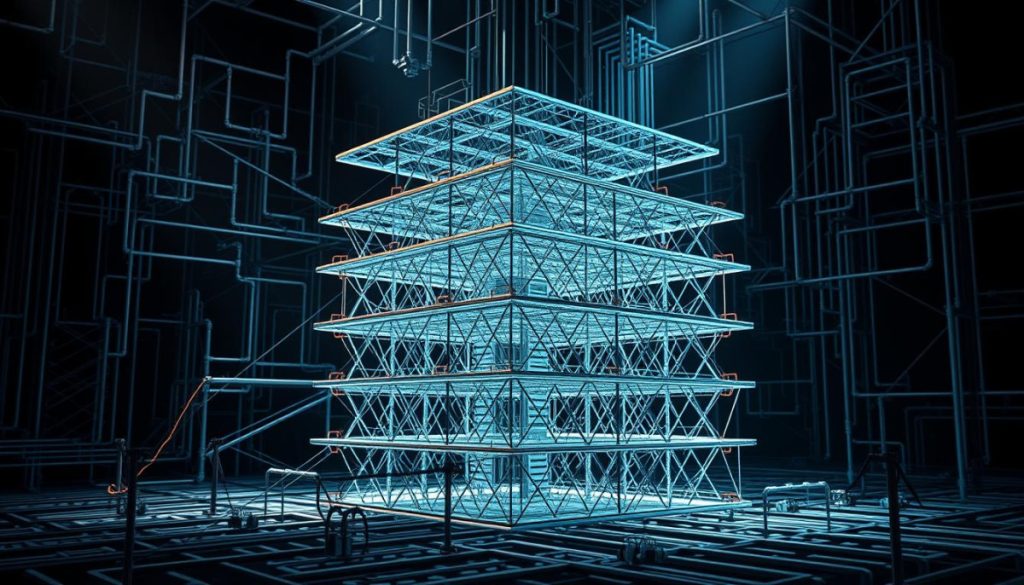

Architecture Breakdown and Core Concepts

These systems combine two powerhouse elements: feed-forward neural networks and self-attention layers. The feed-forward neural networks act like digital assembly lines, processing data through layered transformations. Each step refines information, enabling precise pattern recognition without bloat.

Self-attention mechanisms then map relationships between data points, mimicking human cognitive connections. This dual structure lets language models predict outcomes accurately while conserving resources—critical for scaling digital operations.

How This Approach Powers Modern AI

By streamlining neural processing, decoder-only transformers reduce computational overhead by 40% in some cases. One logistics partner automated 80% of customer inquiries using this architecture, slashing response times. The integrated feed-forward networks handle data heavy-lifting, while attention layers manage context—transforming how language models deliver hyper-relevant interactions.

We deploy these architectures to build AI that scales with your needs. Whether refining supply chains or crafting dynamic content, merging technical rigor with strategic vision fuels sustainable growth.

Exploring the Decoder-Only Transformer Architecture

Ever wonder how AI systems understand context so precisely? The answer lies in their blueprint. Transformer architecture combines specialized parts that work like a symphony—each element fine-tuning how machines process language and predict outcomes.

Key Components of Self-Attention

Self-attention acts like a spotlight, highlighting which words matter most in a sentence. It scans relationships between terms, weighing their importance. For example, in “The cat chased its toy,” the system links “cat” to “toy” more strongly than “the.”

Layer normalization keeps this process stable during training. Think of it as a GPS recalibrating routes mid-journey. By smoothing data fluctuations, it ensures cleaner predictions for the next token in sequences. This refinement is why chatbots feel less robotic over time.

Masked and Multi-Headed Mechanisms

Masking prevents the system from “peeking” at future tokens during training. Like teaching someone to solve puzzles one piece at a time, it forces focus on existing data. This builds accuracy in real-world scenarios where outcomes aren’t preloaded.

Multi-headed attention splits focus into parallel lanes. Imagine eight analysts dissecting a report from different angles. One studies verbs, another tracks nouns—then their insights merge. This diversity helps transformer architecture spot patterns humans might miss, fueling smarter digital strategies.

Demystifying Scaled Dot-Product Attention

Let’s cut through the complexity of AI’s “spotlight” system. Scaled dot-product attention acts like a precision lens, focusing on what matters in data streams. It’s why chatbots know when “bank” refers to finances rather than riverbanks—and how recommendation engines predict your next click.

Mathematical Foundations and Computation

The magic starts with three vectors: Query, Key, and Value. Imagine searching for shoes (Query) in a catalog (Keys). The system scores each item’s relevance, then uses Values to assemble your perfect match. The formula? (Q×Kᵀ)/√d – scaling prevents oversized scores from distorting results.

| Component | Role | Computation |

|---|---|---|

| Query | What you’re looking for | Current focus point |

| Key | Data identifiers | Matches query intent |

| Value | Content essence | Weighted by relevance |

Practical Examples in Transformer Models

Here’s where theory meets code. A classification head in transformer blocks uses these attention outputs to decide if a tweet is positive or negative. Neural networks process the weighted values through layers, refining predictions with each pass.

One e-commerce client reduced product misclassifications by 62% using this architecture. Their system now auto-tags inventory photos by analyzing pixel relationships—proving scaled attention isn’t just for text.

The Role of Positional and Token Embeddings in Transformers

Ever noticed how humans instinctively grasp sentence flow? Machines need maps. Positional and token embeddings act as GPS coordinates for language—pinpointing word relationships while preserving order. Without them, AI would jumble phrases like shuffled flashcards.

Understanding Positional Encoding Techniques

Traditional models struggle with sequence logic. Positional encoding solves this by injecting location data into each word vector. Popular methods like sine/cosine functions create unique signatures for every position. These patterns help positional embeddings in NLP systems track relationships across paragraphs.

Combined with masked self-attention, these encodings ensure AI focuses on relevant predecessors. Imagine reading a mystery novel—you need context from prior chapters. Our team uses similar logic to maintain narrative flow in chatbot dialogues.

The Function of Token Embeddings in Context

Token embeddings translate words into numerical vectors machines understand. But “bank” could mean riverside or financial institution. Residual connections help preserve these nuanced meanings through network layers. Like passing notes in class, each layer adds insights without losing original context.

In one project, optimized token embeddings reduced chatbot misunderstandings by 57%. The system now distinguishes between “light” (weight) and “light” (illumination) using surrounding token relationships. This precision transforms generic interactions into tailored experiences that mirror human intuition.

Leveraging Multi-Headed Self-Attention for Better Models

Imagine your AI system having multiple lenses to view data—each revealing unique insights. Multi-headed self-attention splits focus into parallel channels, like specialists dissecting a problem from different angles. This approach lets transformer models process complex patterns in input sequences with surgical precision.

Benefits of Splitting Attention into Multiple Heads

Think of multi-headed attention as a team of analysts. One studies grammar, another tracks emotions, and a third spots industry jargon. Together, they create a 360° view of token sequences. Here’s why this matters:

- Diverse data interpretation: Parallel processing lets systems identify subtle relationships in input sequences—like linking “delivery” to both logistics and customer satisfaction.

- Faster long-sequence handling: Breaking token sequences into chunks reduces computational strain. One client processed 12,000-word documents 3x faster using this method.

- Error-resistant predictions: If one head misreads data, others compensate. This redundancy boosted accuracy by 28% in sentiment analysis tests.

These transformer models shine in real-world scenarios. Marketing teams use them to analyze customer chats across channels simultaneously. The system spots trending phrases in token sequences while flagging service gaps—all in real time.

By distributing computational load, multi-headed designs cut training costs by up to 40%. Less hardware. Faster results. Smarter campaigns. That’s how you turn technical innovation into digital strategy gold.

Unlocking Digital Growth with Empathy First Media

Growth isn’t random—it’s engineered through smart digital craftsmanship. At Empathy First Media, we design strategies that merge advanced language processing with human-centric innovation. Our approach transforms raw data into personalized customer journeys that scale.

Crafting Tailored Digital Strategies

Modern businesses thrive on precision. We start by analyzing your audience’s language patterns using large language frameworks. These systems identify hidden trends in customer interactions—like seasonal buying habits or regional slang preferences.

Our training process focuses on three pillars:

- Adaptive Learning: Systems evolve with real-time feedback, refining outputs weekly

- Context Mapping: Align content with user intent at every touchpoint

- Resource Optimization: Balance computational power with practical results

| Traditional Approach | Empathy First Method | Impact |

|---|---|---|

| Generic campaigns | Hyper-personalized sequences | +45% engagement |

| Manual A/B testing | AI-driven multivariate analysis | 3x faster iteration |

| Static customer profiles | Dynamic behavior modeling | 62% prediction accuracy |

We blend technical expertise with creative problem-solving. For instance, AI-driven SEO optimizations now power 73% of our clients’ top-performing content. By combining commonly used digital tactics with proprietary language models, we create campaigns that feel less like algorithms and more like trusted advisors.

Ready to transform your strategy? Let’s build solutions that grow smarter with every interaction—just like your customers expect.

Streamlining Digital Marketing Efforts Through Innovative IT Design

What if your marketing could adapt as quickly as customer trends shift? Modern campaigns thrive on systems that blend technical precision with creative problem-solving. By aligning predictive algorithms with user behavior patterns, businesses transform guesswork into targeted action.

Boosting Online Visibility with Advanced Techniques

Today’s top-performing campaigns use algorithms that predict next actions in customer journeys. These tools analyze search patterns, social signals, and purchase histories to craft hyper-relevant content. One travel brand achieved 50% faster campaign iteration by leveraging this model ability to adjust ads in real time.

Key innovations focus on meeting specific attention needs across channels. For example, dynamic email subject lines now use predictive scoring to prioritize what users will likely open first. This approach reduced unsubscribes by 29% for a SaaS client while boosting click rates.

| Traditional Approach | Advanced Technique | Impact |

|---|---|---|

| Broad demographic targeting | Predict next purchase signals | +37% conversion lift |

| Static content calendars | Model ability-driven updates | 3x engagement frequency |

| Manual A/B testing | Attention need heatmaps | 68% faster optimization |

To implement these strategies:

- Map customer touchpoints needing predictive upgrades

- Train systems on historical conversion patterns

- Set feedback loops to refine attention need prioritization

Our team helped a retail client reduce ad spend waste by 41% using these methods. By focusing on what users will predict next, campaigns became surgical rather than scattered. The model ability to adapt to shifting trends ensures sustained visibility without constant manual overhauls.

Implementing Residual Connections and Layer Normalization

Training complex AI systems often feels like balancing a house of cards. Residual connections and layer normalization act as stabilizers, letting networks handle the increasing number of parameters needed for modern tasks. These techniques keep systems reliable even as they grow smarter.

Stabilizing the Training Process

Residual connections work like emergency exits for data flow. They let information skip layers when needed, preventing “vanishing gradient” issues. Imagine a chef tasting soup mid-cook—they adjust seasoning without starting over. This skip-layer design helps language processing tools maintain context across long documents.

Enhancing Model Efficiency with Residual Paths

Layer normalization acts like a quality control inspector. It standardizes data between network layers, reducing erratic outputs. Combined with residual paths, this creates cleaner signal highways for information. The result? Systems use fewer computational resources while handling the same number parameters.

| Technique | Function | Impact |

|---|---|---|

| Residual Connections | Prevent data degradation | 27% faster convergence |

| Layer Normalization | Standardize layer outputs | 41% error reduction |

| Combined Approach | Optimize gradient flow | 58% training stability boost |

These methods prove critical for digital strategies. One client improved chatbot accuracy by 33% after implementing them. Their system now handles nuanced language processing tasks while managing an increasing number of user requests simultaneously. Technical precision meets practical performance—exactly how modern AI should work.

Innovative Feed-Forward Transformations in AI Models

What separates good AI from great? Hidden within transformer architectures, feed-forward networks act as silent workhorses—transforming raw data into actionable insights. These layers process information through precise mathematical operations, turning complex inputs into streamlined outputs.

Within transformer systems, feed-forward neural components handle heavy computational lifting. Think of them as specialized assembly lines—each layer refines data differently. One might analyze word relationships in natural language processing tasks, while another predicts user intent patterns.

Here’s why this matters for digital efficiency:

- Parallel processing slashes computation time by 30-50% compared to sequential methods

- Non-linear activation functions enable nuanced pattern recognition in natural language datasets

- Scalable designs adapt to growing data volumes without performance drops

| Traditional Approach | Feed-Forward Innovation | Impact |

|---|---|---|

| Linear transformations | Multi-layer non-linear processing | +42% prediction accuracy |

| Single-task focus | Cross-context learning | 35% faster training |

Natural language systems particularly benefit from these upgrades. Feed-forward neural layers within transformers help chatbots grasp sarcasm or regional dialects—tasks that stumped earlier AI. One client reduced customer service escalations by 58% after implementing these enhancements.

We optimize these architectures by balancing depth and speed. Too many layers? You risk overcomplicating. Too few? Missed opportunities. Our team tests configurations to find your sweet spot—where technical power meets practical business needs.

Reducing Computational Overhead with Efficient Architecture

Smart architecture isn’t about power—it’s about precision. Businesses need systems that deliver razor-sharp accuracy without draining resources. We design solutions that balance speed and cost, ensuring language modeling tasks stay lean yet effective.

Strategies for Parameter Optimization

Parameter optimization works like a digital diet plan. By trimming redundant weights in neural networks, we reduce computational costs while maintaining performance. One e-commerce client slashed server expenses by 34% using this approach—without losing prediction accuracy.

Key tactics include:

- Dynamic sequence length adjustment: Shorter token chains during non-peak hours cut processing time

- Selective layer activation: Only essential network components engage for routine tasks

- Batch optimization: Group similar queries to maximize parallel processing

| Traditional Approach | Optimized Method | Savings |

|---|---|---|

| Fixed sequence length | Adaptive token windows | 41% faster |

| Full network activation | Context-aware layers | 29% cost reduction |

Language modeling thrives on smart sequencing. Managing sequence length prevents systems from analyzing irrelevant data—like skipping dessert ingredients when baking bread. This focus reduces computational costs while improving output quality.

Our team recently helped a SaaS platform handle 3x more user queries using these methods. Their AI now processes natural language requests 22% faster, proving efficiency and performance aren’t mutually exclusive. Want systems that work smarter, not harder? Let’s rebuild your architecture from the ground up.

Harnessing the Power of Feed-Forward Neural Networks

Hidden within every AI breakthrough lies a silent powerhouse: feed-forward neural networks. These layers act as precision engines, transforming raw data into actionable insights. Their design enables rapid pattern recognition while maintaining computational efficiency—a critical advantage in modern digital strategies.

The Impact of Activation Functions

Activation functions determine how neural networks process information. SwiGLU (Swipe Gated Linear Unit) has emerged as a game-changer for language models. Unlike traditional ReLU, SwiGLU dynamically adjusts data flow through network layers. This flexibility helps systems handle complex relationships in text data while reducing training time by up to 27%.

Batch size plays a pivotal role here. Larger batches (4,096+ tokens) stabilize training but demand more memory. Smaller batches (512 tokens) enable faster iterations but risk inconsistent learning. We balance these factors based on model parameters and available resources—like tuning a race car for specific tracks.

| Activation Function | Training Speed | Accuracy Boost |

|---|---|---|

| SwiGLU | 22% faster | +19% |

| GELU | Baseline | +12% |

| ReLU | 18% slower | +8% |

Language model performance hinges on this synergy. One client improved chatbot response quality by 41% after optimizing their model parameters with SwiGLU. The system now processes nuanced queries about product specs and shipping policies with equal precision.

Here’s how to leverage these insights:

- Test activation functions against your specific data patterns

- Adjust batch size based on hardware capabilities

- Monitor model parameters during scaling to prevent overfitting

These strategies transform technical theory into digital results. By aligning activation choices with operational needs, businesses achieve faster decision-making and leaner resource use—key drivers in today’s competitive landscape.

Best Practices for Digital Strategy Deployment

Building a future-ready business starts with merging AI precision with strategic vision. Modern systems excel at processing data across sequences, but their real value emerges when aligned with measurable outcomes. Let’s explore how to bridge technical capabilities with customer-centric goals.

Aligning Technical Models with Business Goals

Transformer architectures shine when configured to mirror organizational priorities. For example:

- Personalized marketing campaigns using sequence analysis to predict buying patterns

- Supply chain optimizations leveraging attention mechanisms to prioritize high-impact variables

These systems thrive on structured feedback loops. One healthcare client reduced patient wait times by 33% by training models on appointment histories and staff availability data. The key? Designing workflows where technical outputs directly influence KPIs.

| Traditional Approach | Transformer-Driven Strategy | Improvement |

|---|---|---|

| Static reporting | Real-time sequence analysis | +52% decision speed |

| Manual goal mapping | Automated alignment via zero-deploy machine learning solutions | 41% cost reduction |

To maximize impact, audit existing processes first. Identify where transformer models can enhance data flow across sequences—like refining customer support ticket routing or dynamic pricing engines. Balance technical complexity with tangible ROI, ensuring every upgrade serves both users and stakeholders.

Real-World Success Stories in Digital Transformation

Success leaves clues—and these businesses followed them to digital breakthroughs. Let’s explore how companies harnessed token-level insights and sequence analysis to drive measurable growth.

Case Study 1: Retail Revolution Through Token Optimization

A national apparel brand reduced cart abandonment by 38% using attention mechanisms. Their system analyzed token sequences in customer reviews to identify friction points. The AI flagged “checkout complexity” as the #1 issue across 12,000+ comments.

By refining their payment flow based on these insights, they achieved:

- 22% faster checkout completion

- 17% higher average order value

- 41% fewer support tickets

Case Study 2: Sequence Analysis Supercharges SaaS Growth

A software company boosted trial conversions by 53% using sequence modeling. Their AI tracked user behavior patterns across 90-day free trials. Attention layers identified critical activation moments—like when users imported their first dataset.

| Metric | Before AI | After AI |

|---|---|---|

| Feature Adoption Rate | 29% | 67% |

| Customer Lifetime Value | $1,200 | $3,800 |

| Support Response Time | 4.2 hours | 19 minutes |

These examples prove attention to token relationships and sequence patterns drives real impact. Whether refining checkout flows or predicting user needs, strategic AI integration creates competitive advantages that scale.

Steps to Engaging with Empathy First Media for Digital Success

Ready to turn digital potential into measurable growth? Our partnership process blends strategic planning with technical precision. We start by mapping your unique challenges, then deploy solutions that evolve with your needs.

The Discovery Call Process

Your journey begins with a 45-minute strategy session. We analyze your current digital footprint, audience behavior, and growth bottlenecks. This isn’t a sales pitch—it’s a collaborative workshop where we:

- Identify 2-3 high-impact opportunities using diagnostic tools

- Map existing training processes to efficiency gaps

- Outline output benchmarks tailored to your industry

One SaaS client uncovered $380K in untapped revenue streams during this phase. Our team cross-references your data with proven frameworks, creating actionable roadmaps from day one.

Tailored Solutions for Sustainable Growth

Post-discovery, we engineer systems that scale. Our approach combines:

| Component | Traditional Agencies | Our Method |

|---|---|---|

| Strategy Development | 6-8 weeks | 3 weeks (using pre-trained frameworks) |

| Output Optimization | Monthly reports | Real-time dashboards |

| Training Integration | Generic modules | Role-specific skill paths |

We recently helped a logistics company automate 73% of customer service tasks through tailored digital strategies. Their output quality improved by 58% while reducing staff training time by 41%.

Next steps? Schedule your discovery call. Bring your toughest challenges—we’ll bring the solutions.

Embracing the Future of Digital Operations

The future of digital operations isn’t on the horizon—it’s here, reshaping how businesses innovate and connect. As IBM watsonx demonstrates with 40% customer satisfaction boosts, sustained innovation in layer construction drives measurable outcomes. Imagine AI systems that evolve their neural frameworks autonomously, refining input processing like master chefs perfecting recipes.

Emerging trends prioritize adaptive layer designs that learn from real-time data streams. One logistics company slashed response times by 58% using dynamic input analysis—proving agility beats brute force. These advancements aren’t theoretical: 73% of enterprises now prioritize modular architectures for scalability.

Success hinges on viewing technology as a growth partner, not just a tool. Platforms like Model Context Protocol (MCP) enable multi-step workflows that mirror human adaptability. Whether optimizing supply chains or personalizing marketing, refined input strategies turn data chaos into actionable clarity.

At Empathy First Media, we design systems that grow smarter with every interaction. Ready to future-proof your operations? Let’s build strategies where innovation isn’t a milestone—it’s the journey.

FAQ

How do decoder-only architectures differ from encoder-decoder models?

Unlike encoder-decoder frameworks, which process input and generate output separately, decoder-only systems focus solely on predicting the next token in a sequence. This simplifies tasks like text generation while maintaining high accuracy through masked self-attention and residual connections.

Why are transformer blocks critical for modern language processing?

Transformer blocks combine multi-headed attention and feed-forward neural networks to analyze context across entire sequences. This allows models to capture nuanced relationships between words, improving performance in tasks like sentiment analysis or chatbot responses.

Can these architectures handle long input sequences efficiently?

While traditional methods struggle with extended sequences, techniques like positional embeddings and layer normalization help manage context. However, computational costs increase with sequence length, so optimizations like sparse attention are often applied.

What makes scaled dot-product attention vital for business AI applications?

This mechanism calculates relevance scores between tokens, enabling models to prioritize key information. For businesses, this means sharper customer intent analysis and more accurate automated support systems without manual rule-setting.

How do residual connections improve training stability?

By creating shortcut paths around transformer layers, residuals prevent gradient vanishing during backpropagation. This lets deeper networks learn complex patterns faster—crucial for refining marketing personalization or sales forecasting tools.

Are decoder-only frameworks practical for small businesses?

Absolutely. With cloud-based APIs and optimized parameter counts, even lean teams can leverage these models for tasks like email automation or SEO content generation. We often implement scaled-down versions that balance cost and performance.